The enterprise storage industry goes through cycles of innovation and boredom. For a long time, storage was an afterthought and people focused primarily on computing. Then, in the 1990s, storage and networking became important (think EMC and Cisco). Fibre Channel, SAN (Storage Area Network), NAS (Network Attached Storage) and iSCSI came into vogue in the late 1990s and early 2000s; disks were getting faster; novel ideas such as object storage came into being; and cloud computing demanded vast storage.

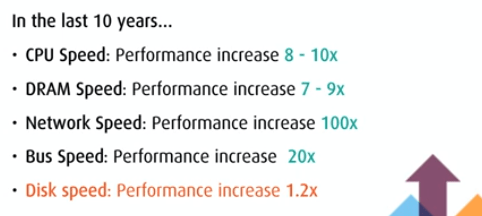

Then storage reached a plateau around 2008.

Hard drives couldn’t spin any faster than 15K rpm; technologies such as Hadoop and distributed computing seemed to imply that storage had become a commodity; and there were no breakthroughs in protocols. Drives got bigger, but slower, which meant that once again it was all about computing: just get faster CPUs with more memory to handle the workload. Another “solution” was to add more spindles and use expensive configurations such as RAID 10 (RAID 1+0).
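
To see why the 15K rpm ceiling mattered, here is a rough back-of-the-envelope sketch of random IOPS per spindle. The rotational latency follows directly from the rpm; the average seek times are assumed, typical-looking values rather than figures from any particular drive, and `hdd_random_iops` is a hypothetical helper name.

```python
def hdd_random_iops(rpm: float, avg_seek_ms: float) -> float:
    """Estimate random IOPS for a single spindle."""
    # On average the platter turns half a revolution before the sector arrives.
    rotational_latency_ms = (60_000.0 / rpm) / 2
    # One random I/O costs roughly one seek plus the rotational wait.
    service_time_ms = avg_seek_ms + rotational_latency_ms
    return 1000.0 / service_time_ms


# (rpm, assumed average seek time in ms) -- the seek times are illustrative only
for rpm, seek_ms in [(7200, 8.5), (10_000, 4.5), (15_000, 3.5)]:
    print(f"{rpm:>6} rpm: ~{hdd_random_iops(rpm, seek_ms):.0f} random IOPS per drive")
```

Even at 15K rpm the estimate stays in the low hundreds of IOPS per drive, which is why "add more spindles" was the only way to scale random I/O.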

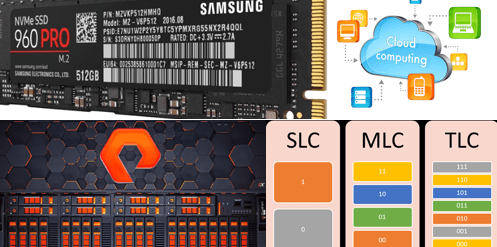

Enter SSDs (Solid State Drives). These non-volatile flash memory drives are orders of magnitude faster than spinning hard drives. Latency is in the sub-millisecond range, and data transfer, in both IOPS and bandwidth, is out of this world. It’s like when the world went from boomboxes to iPods. The digital transformation was ready to invade the storage industry.

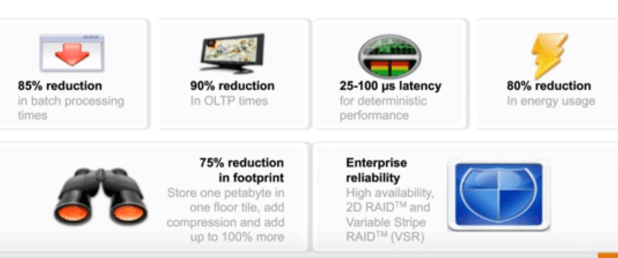

Flash drives and arrays have enormous advantages in performance, and they take up less space in data centers (which also means cost savings in power and cooling).

BUT … there was one big problem: price. SSDs were 100x more expensive than HDDs (per unit capacity). This meant that storage arrays based on flash drives were the Ferraris of storage systems. Only customers with a lot of money and unique requirements for high performance could afford all-flash systems.

Inflexion Point for SSD — Declining Price, New Protocol

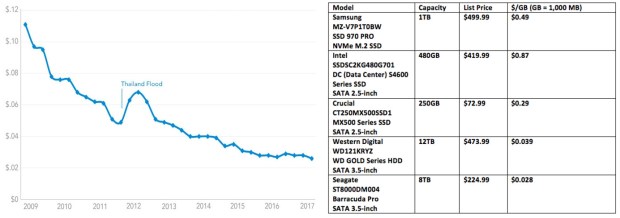

However, that’s beginning to change. In fact, we are at a tipping point where all-flash storage arrays are becoming more affordable and competitive. Also, technical geeks have come up with a new protocol, NVMe, which is lightweight and much faster than the older SCSI/SAS/SATA protocols. Given these two factors, it’s very likely that within the next 5-10 years, flash drives and flash arrays will become the norm.

SSD Cost

Wait. Right now, SSDs are still 10x more expensive than HDDs: about 30 cents a GB for an SSD, compared to 3 cents a GB for an HDD.

Does that mean that flash systems are still relegated to niche markets? Yes and no. For one, some customers evaluate cost not “per GB” but “per I/O.” Thus, if one system can perform 10x better than another, customers will be willing to pay 10x for the first system. Of course, flash cannot outperform HDDs 10x in all circumstances: in sequential reads and writes, the gap is far smaller, and an HDD can come much closer to an SSD.
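
The “per GB” versus “per I/O” comparison can be sketched with a little arithmetic. The per-GB prices below are the ones quoted above; the 1,000 GB capacity and the IOPS figures (200 for an HDD, 50,000 for an SSD) are assumptions picked purely for illustration, and `drive_economics` is a hypothetical helper.

```python
def drive_economics(price_per_gb: float, capacity_gb: float, iops: float):
    """Return (drive cost in dollars, dollars per IOPS) for one drive."""
    drive_cost = price_per_gb * capacity_gb
    return drive_cost, drive_cost / iops


# Prices per GB come from the text; capacity and IOPS are assumed values.
hdd_cost, hdd_per_iops = drive_economics(0.03, 1000, iops=200)
ssd_cost, ssd_per_iops = drive_economics(0.30, 1000, iops=50_000)

print(f"HDD: ${hdd_cost:.0f} per drive, ${hdd_per_iops:.4f} per IOPS")
print(f"SSD: ${ssd_cost:.0f} per drive, ${ssd_per_iops:.4f} per IOPS")
print(f"SSD costs {ssd_cost / hdd_cost:.0f}x more per GB, "
      f"but {hdd_per_iops / ssd_per_iops:.0f}x less per IOPS")
```

Under these assumed numbers the ranking flips depending on the metric, which is the point: a workload bound by random I/O and a workload bound by capacity will price the same two drives very differently.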

SSD Problems

Moreover, an SSD has three big drawbacks. First, there is only a limited number of write/rewrite cycles (also called program-erase or P-E cycles) that an SSD can handle before it dies. Second, a write/rewrite cycle often involves moving data around, since an entire block has to be erased before any particular page within the block can be updated. Third, a big chunk of the SSD can be reserved (and so unavailable to the user) for moving data during garbage collection.

Wear Leveling and Garbage Collection

The first problem (limited P-E cycles) is handled via wear leveling. This is a bit like a person switching between multiple pairs of shoes to ensure that all shoes experience the same level of wear and tear. If the data in some blocks experiences a lot of rewrites/updates, then that data is moved to other blocks that haven’t been so busy.
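
As a minimal sketch of the idea (a toy model, not how any real SSD controller is implemented), the allocator below always hands out the free block with the fewest erases, so a piece of frequently rewritten data ends up rotating across all blocks. The class and function names are hypothetical.

```python
import heapq


class WearLeveler:
    """Toy allocator: always hand out the free block with the fewest erases."""

    def __init__(self, num_blocks: int):
        self.erase_counts = [0] * num_blocks
        # Min-heap of (erase_count, block_id) for blocks that are currently free.
        self.free = [(0, block) for block in range(num_blocks)]
        heapq.heapify(self.free)

    def allocate(self) -> int:
        _, block = heapq.heappop(self.free)
        return block

    def erase(self, block: int) -> None:
        # Erasing costs the block one P-E cycle, then it returns to the free pool.
        self.erase_counts[block] += 1
        heapq.heappush(self.free, (self.erase_counts[block], block))


def rewrite_hot_data(leveler: WearLeveler, rewrites: int) -> list:
    """Rewrite one piece of 'hot' data many times; return per-block erase counts."""
    current = leveler.allocate()
    for _ in range(rewrites):
        new = leveler.allocate()  # new version goes to the least-worn free block
        leveler.erase(current)    # the old copy is stale, so its block is recycled
        current = new
    return leveler.erase_counts


counts = rewrite_hot_data(WearLeveler(num_blocks=8), rewrites=800)
print("erase counts per block:", counts)
print("spread (max - min):", max(counts) - min(counts))
```

Without leveling, all 800 erases would land on the same block; here they are spread almost evenly, so no single block burns through its P-E budget early.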

The second problem is handled through garbage collection. If a block has a lot of stale data and only some valid data, then first the valid data is moved to a new location, and then the entire block is erased. This makes the entire block available for new data.
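
The two steps (relocate valid pages, then erase the block) can be sketched with a toy model. Picking the block with the most stale pages is a simple greedy policy assumed here for illustration; real controllers use their own victim-selection logic. The data layout and the `garbage_collect` function are hypothetical.

```python
STALE = None  # marker for a page whose data has been superseded


def garbage_collect(blocks: dict, spare_id: int):
    """Run one greedy GC pass. Returns (victim block id, pages copied)."""
    # Pick the victim: the non-spare block holding the most stale pages.
    victim_id = max(
        (block_id for block_id in blocks if block_id != spare_id),
        key=lambda block_id: sum(page is STALE for page in blocks[block_id]),
    )
    # Step 1: relocate the still-valid pages into the erased spare block.
    survivors = [page for page in blocks[victim_id] if page is not STALE]
    blocks[spare_id].extend(survivors)
    # Step 2: erase the whole victim block, making it available for new data.
    blocks[victim_id] = []
    return victim_id, len(survivors)


blocks = {
    0: ["A", STALE, STALE, STALE],  # mostly stale: a good GC candidate
    1: ["B", "C", STALE, "D"],      # mostly valid: expensive to collect
    2: [],                          # erased spare block
}
victim, copied = garbage_collect(blocks, spare_id=2)
print(f"collected block {victim}, copied {copied} valid page(s)")
print(blocks)
```

Note that reclaiming the block required copying a page the host never asked to rewrite. That extra copy is the cost of garbage collection.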

Over-Provisioning and Write Amplification

Garbage collection requires a separate area within the SSD where data can be temporarily relocated. This extra space can amount to anywhere from 7% to 28% of the usable capacity. This is called over-provisioning.
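
Here is a quick sketch of how those percentages can arise, computing over-provisioning as reserved space relative to usable capacity. The two drive configurations are assumed examples chosen to land near each end of the 7% to 28% range, not specifications of real products.

```python
GIB = 2**30  # binary gigabyte, the unit NAND chips are typically built in
GB = 10**9   # decimal gigabyte, the unit drive capacity is marketed in


def over_provisioning_pct(physical_gb: float, usable_gb: float) -> float:
    """Reserved space as a percentage of the usable capacity."""
    return (physical_gb - usable_gb) / usable_gb * 100


# Assumed example 1: 128 GiB of raw flash exposed as a 128 GB drive.
raw_gb = 128 * GIB / GB
print(f"128 GiB raw, 128 GB usable: {over_provisioning_pct(raw_gb, 128):.1f}% OP")

# Assumed example 2: 128 GB of raw flash exposed as a 100 GB drive.
print(f"128 GB raw, 100 GB usable: {over_provisioning_pct(128, 100):.1f}% OP")
```

The first case shows that the gap between binary and decimal gigabytes alone gives roughly 7%; larger reserves are a deliberate trade of capacity for garbage-collection headroom.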

Moving data around, and keeping track of it through metadata, means that the drive performs many more writes than the host actually requested. These extra writes are time-consuming and wear out the flash. This problem is referred to as write amplification.
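
Write amplification is usually expressed as a ratio of flash writes to host writes. The sketch below computes that ratio and a rough estimate of how it eats into endurance. It ignores metadata writes, and the capacity, P-E cycle budget, and page counts are assumed values for illustration; both function names are hypothetical.

```python
def write_amplification(host_pages: int, relocated_pages: int) -> float:
    """Ratio of pages physically written to flash vs. pages the host wrote."""
    return (host_pages + relocated_pages) / host_pages


def lifetime_host_writes_tb(capacity_gb: float, pe_cycles: int, waf: float) -> float:
    """Rough estimate of total host data writable before the P-E budget runs out."""
    return capacity_gb * pe_cycles / waf / 1000


# Assumed workload: for every 1,000 host pages, GC relocates 2,000 more.
waf = write_amplification(host_pages=1000, relocated_pages=2000)
print(f"write amplification factor: {waf:.1f}")

# Assumed drive: 1,000 GB with a budget of 3,000 P-E cycles per block.
for factor in (1.0, waf):
    tb = lifetime_host_writes_tb(1000, 3000, factor)
    print(f"WAF {factor:.1f}: ~{tb:.0f} TB of host writes over the drive's life")
```

Under these assumptions, a write amplification factor of 3 cuts the amount of data the host can write over the drive's life to a third, which is why vendors work hard to keep the factor close to 1.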

Handling SSD Problems

All-flash vendors like Pure Storage (FlashArray), HPE (Nimble) and Dell EMC (XtremIO) have their own ways of handling these SSD problems. Some, like Pure Storage, do it at the software level: their Purity software, which runs on the storage controllers, takes charge of the data layout on the drives. Most others let the SSDs themselves handle issues such as wear leveling and garbage collection.

The advantage of Pure is that their intelligent software allows them to use cheaper, consumer-grade SSDs based on MLC (multi-level cell) technology. Other vendors use more expensive SSDs based on SLC (single-level cell) and eMLC (enterprise MLC) technology.

Conclusion

Thanks to the falling prices of SSDs, newer protocols built specifically for flash memory (NVMe and NVMe-over-Fabrics), and smarter algorithms and software, all-flash storage arrays are about to take off. Storage as the weak link in the data center is about to become history. This is also perfect timing, since AI is becoming more mature, and 5G and IoT are just around the corner. An explosion of data is about to be met with lightning-speed storage arrays!

Author: Chris Kanthan