NVMe (“Non-Volatile Memory Express”) is a protocol that made its appearance four years ago and is now mature and widely adopted enough to be a major player in the world of flash arrays.

Here are some quick highlights of NVMe:

- It was designed from the ground up for SSDs built on non-volatile memory such as NAND flash. Big names like Dell, EMC, Oracle, Micron, and SanDisk were all involved in developing the specs.

- It’s a lightweight protocol with low latency, high IOPS, and high bandwidth.

- It runs natively on PCIe as well as on fabrics (Ethernet, Fibre Channel etc.)

- Compared to spinning drives (HDDs) attached over SCSI/SAS or AHCI/SATA, it performs much better (25x or more in some cases).

- While SATA (AHCI) drives handle a single queue of 32 commands and SAS drives a single queue commonly cited at 254 commands, NVMe can theoretically handle a whopping 64K I/O queues (65,535) with up to 64K commands (65,536) in each queue! This parallelism is a key feature of NVMe that allows it to scale.

- Because NVMe drives plug directly into the PCIe bus, the CPU of the storage controller can talk to the SSD without an intermediate HBA. This means more performance and far fewer CPU cycles per I/O (roughly half as many).

- NVMe has only 13 required commands (10 admin commands and 3 I/O commands), which makes it more efficient than AHCI (100+ commands) or SCSI (400+ commands). Less “blah blah,” more action!

- NVMe-oF is the short name for “NVMe over Fabrics.” More products and options in this area are just around the corner, which will make NVMe more ubiquitous.

- To run NVMe over an Ethernet fabric, a commonly used transport is RoCE, which stands for RDMA over Converged Ethernet. RoCE v2 runs over UDP/IP, so its traffic can be routed across switches and routers. (To connect a server to a flash array using NVMe over Ethernet or FC, the server needs an RDMA-capable NIC or an FC HBA, respectively.)

- NVMe drivers are available for all the major operating systems and for VMware ESXi.
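To put the queue numbers from the list above in perspective, here is a small back-of-the-envelope sketch. It assumes the commonly quoted limits: one queue of 32 commands for AHCI/SATA, and the theoretical NVMe maximums of 65,535 I/O queues with 65,536 slots each (the "64K" figures). The `QueueModel` class and its field names are made up for this illustration, and real drives and drivers expose far fewer queues than the theoretical ceiling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QueueModel:
    """Hypothetical helper: a protocol's theoretical queueing limits."""
    name: str
    queues: int  # number of I/O queues
    depth: int   # command slots per queue

    @property
    def max_outstanding(self) -> int:
        # Upper bound on command slots across all queues.
        return self.queues * self.depth


# Assumed limits (theoretical maximums, not what a given drive offers).
AHCI = QueueModel("AHCI/SATA", queues=1, depth=32)
NVME = QueueModel("NVMe", queues=65_535, depth=65_536)


def scale_factor(big: QueueModel, small: QueueModel) -> int:
    """How many times more command slots `big` has than `small`."""
    return big.max_outstanding // small.max_outstanding


if __name__ == "__main__":
    for model in (AHCI, NVME):
        print(f"{model.name}: {model.queues:,} x {model.depth:,} "
              f"= {model.max_outstanding:,} command slots")
    print(f"NVMe / AHCI: {scale_factor(NVME, AHCI):,}x")
```

On paper that works out to 32 slots for AHCI versus 4,294,901,760 for NVMe. Nobody runs anywhere near that many commands in flight, but it shows why NVMe can give each CPU core its own queue instead of funneling everything through one.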

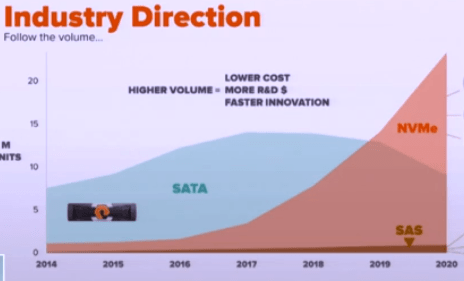
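If you want to see whether a Linux box already has NVMe controllers and which transport they use (PCIe or a fabric), a rough sketch like the one below can help. It assumes the Linux kernel NVMe driver's sysfs layout, where each controller shows up under `/sys/class/nvme` with `model` and `transport` attribute files. The function name `list_nvme_controllers` is just an illustrative choice, and on systems without that directory it simply returns an empty list.

```python
from pathlib import Path


def list_nvme_controllers(sysfs_root="/sys/class/nvme"):
    """Return a list of dicts describing NVMe controllers found in sysfs.

    Assumes the Linux sysfs layout: <sysfs_root>/nvmeN/{model,transport}.
    Missing or unreadable attributes are reported as None.
    """
    root = Path(sysfs_root)
    if not root.is_dir():
        return []  # no NVMe driver loaded, or not a Linux system

    controllers = []
    for ctrl in sorted(root.iterdir()):
        info = {"name": ctrl.name}
        for attr in ("model", "transport"):
            try:
                info[attr] = (ctrl / attr).read_text().strip()
            except OSError:
                info[attr] = None
        controllers.append(info)
    return controllers


if __name__ == "__main__":
    found = list_nvme_controllers()
    if not found:
        print("No NVMe controllers found")
    for c in found:
        print(f"{c['name']}: model={c['model']} transport={c['transport']}")
```

A locally attached drive would normally report a PCIe transport, while a controller reached through NVMe-oF would report the fabric transport it is connected over.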

Pretty neat features, right? Expect NVMe devices/arrays and NVMe fabrics to skyrocket in the coming years!

Author: Chris Kanthan